We are at ICRA 2026 in booth 91, and we are looking forward to a few days in Vienna for research discussions, technical conversations, and reconnecting with the community.

ICRA has always been a favorite of ours. It brings together researchers from very different domains, and despite their different research goals, many face similar questions: how to build reliable experiments, iterate quickly, and evaluate results in a repeatable way.

Many of the improvements in the Crazyflie ecosystem over the years have started exactly there. Someone stops by the booth, describes a challenge in their lab, and shortly thereafter a feature, library, or hardware addition begins to take shape.

This year we are bringing a few things we are excited to discuss.

Towards larger and easier-to-manage swarms

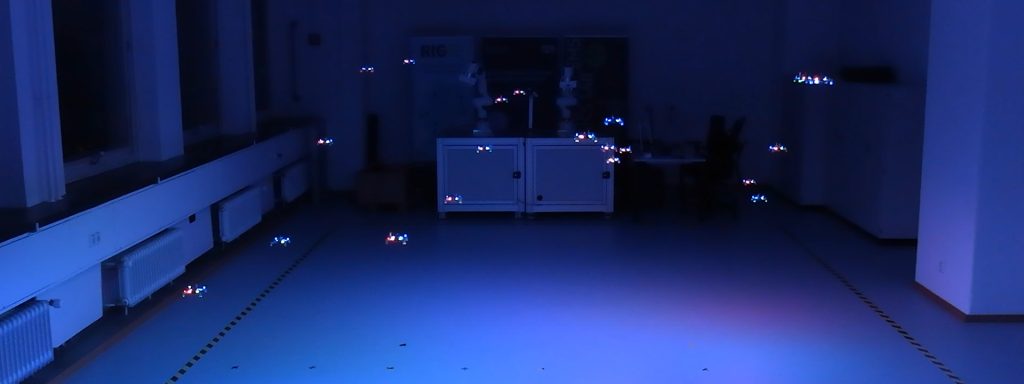

Over the last months we have spent time improving how larger groups of Crazyflies behave and scale in practice.

Swarm experiments often start small. Then a few drones become ten, ten become fifty, and suddenly radio communication and system overhead become part of the research problem itself.

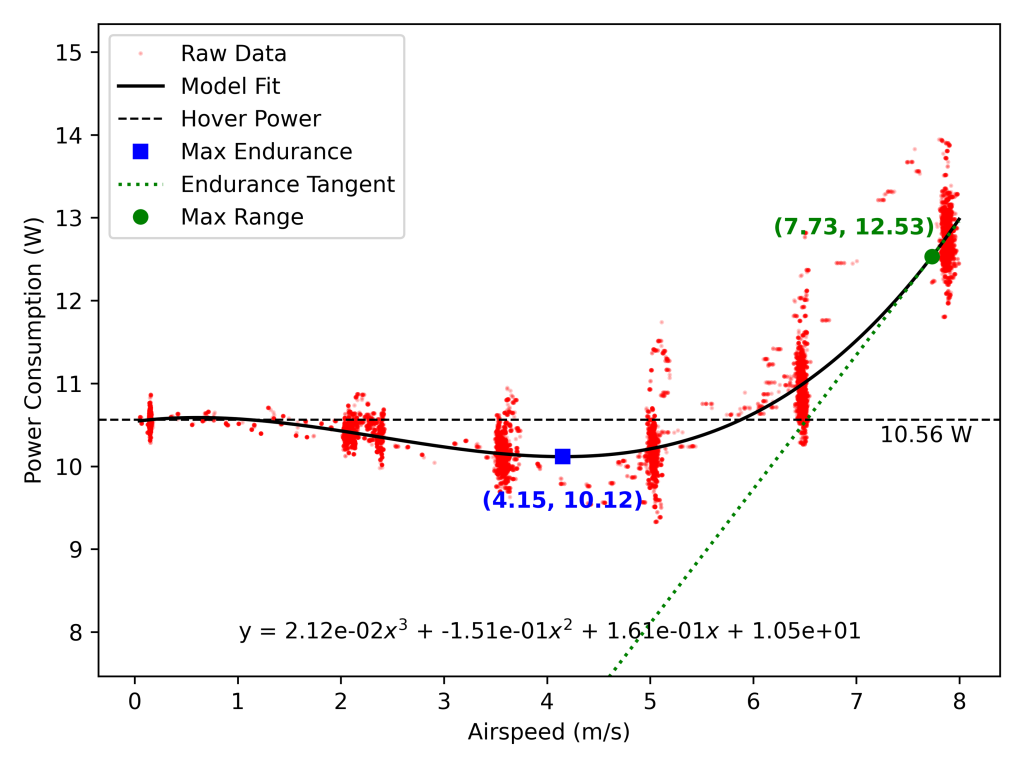

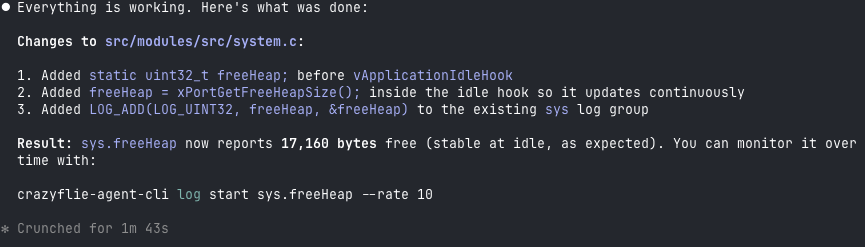

Recent work on overall system performance has significantly increased the number of Crazyflies that can be handled from a single radio, reducing communication bottlenecks and making larger coordinated experiments easier to run. A transition to Rust has also helped reduce connection overhead and improve responsiveness in larger systems. In parallel, we are working toward improved tooling and workflows for managing swarms, with the goal of reducing setup complexity and making experiments easier to reproduce.

We are looking forward to discussing where this work is heading and hearing what challenges researchers encounter in their own systems.

Tech talk: “A Swarm Welcome to New Aerial Robotics Functionality”

Wednesday, June 3rd, 12:45 CEST, at the Tech Talk Stage in Hall C7

We will give a tech talk focused on recent work around swarm functionality and capabilities.

The session will cover improvements that significantly expand what can be done with larger Crazyflie groups and how recent developments are reducing practical limitations in swarm experimentation.

If your work involves multi-agent systems, collective behaviors, or coordinated aerial robotics, stop by and continue the discussion afterward.

Demonstration: SwarmGPT and human interaction with robot swarms

On Wednesday afternoon, together with the Learning Systems and Robotics Lab (LSY) at the Technical University of Munich, we will demonstrate SwarmGPT running on the Crazyflie.

Some of you may remember our end-of-year collaboration a few months ago. This time we are bringing a more interactive version of the concept. SwarmGPT explores how natural-language intent can be translated into coordinated swarm behaviors. Rather than manually programming trajectories, users pick a piece of music, describe the desired expression in a prompt, and leave planning and safety to the system.

Come by our booth and try it for yourselves.

Color, Rust, camera deck, and more

We will also bring several smaller developments and ongoing efforts that we have discussed on the blog over recent months.

Some of these include:

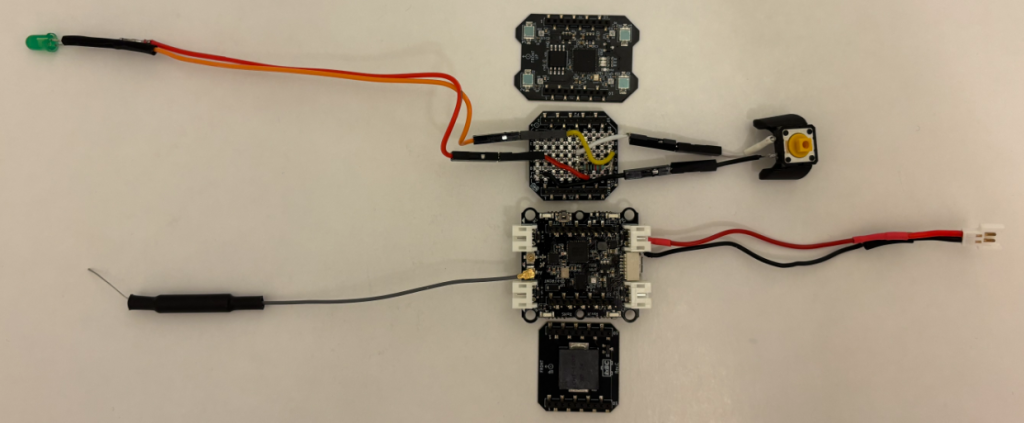

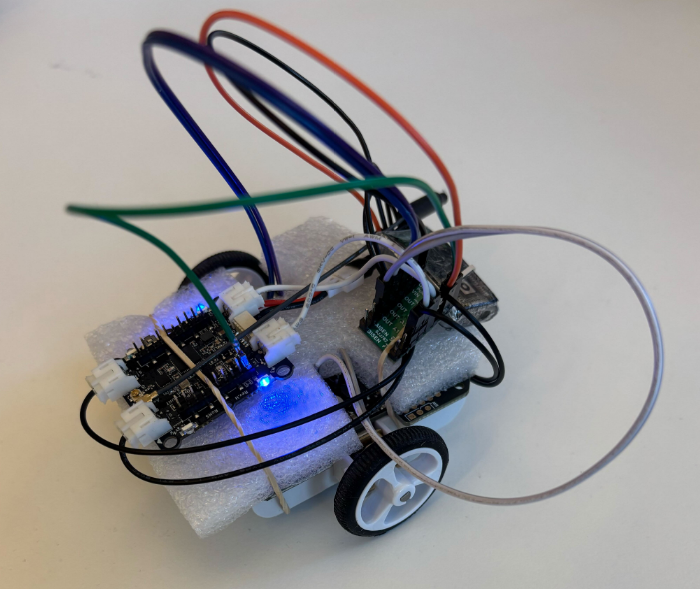

- Continued work on Rust

- Improved radio performance for larger groups of drones

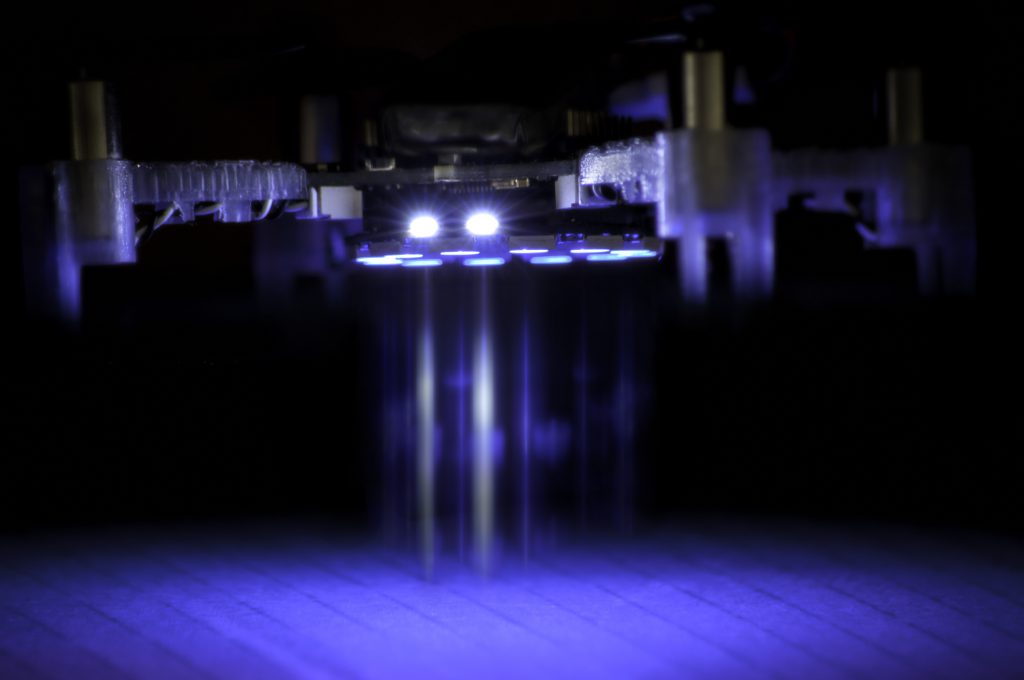

- The now-available Color LED deck

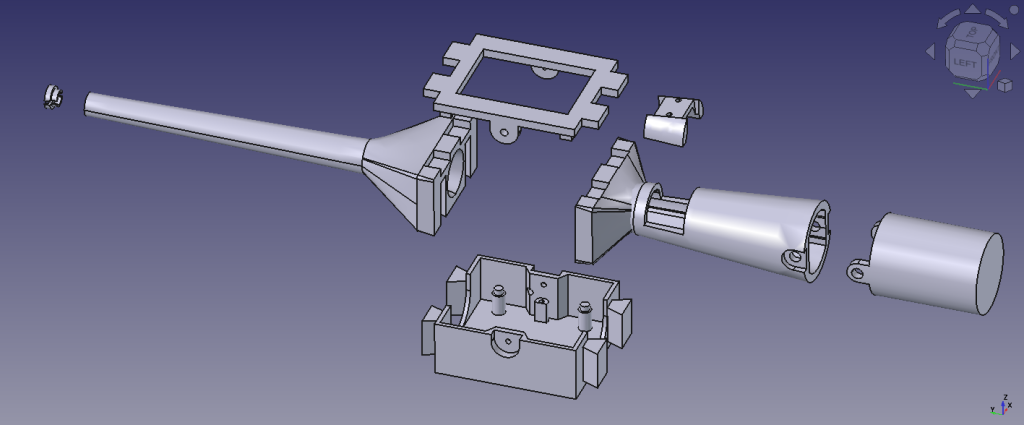

- An early version of the upcoming camera deck

- Discussions around improved swarm workflows and management support

The Color LED deck has turned out to be useful far beyond aesthetics. In swarm experiments, visible state information can help indicate timing, grouping, synchronization, and system behavior across multiple agents.

User survey

We will be running a community survey during ICRA. One of the strengths of the Crazyflie ecosystem is the breadth of research and experimentation happening around it. People use the platform in ways we never originally anticipated, often combining different hardware, software, and workflows depending on the research problem they are trying to solve.

The survey is an opportunity for us to better understand how the platform is being used today, and the feedback helps us make better decisions around priorities, tooling, documentation, hardware, and long-term roadmap direction.

We would greatly appreciate a few minutes of your time. Please complete the survey via this link.

Trade your poster with us

This has become a tradition. If you are presenting research involving Crazyflie and do not need your poster after your session, bring it by the booth.

We love collecting them and filling our office walls with the incredible range of work built on the platform. It is also one of our favorite ways of seeing where the community takes the Crazyflie next.

Bring your poster, and we will make sure you leave with a little Bitcraze treat in return.

Find us in booth 91

If you are working with Crazyflie already, considering it for your research, or simply want to discuss ideas around swarm robotics, autonomous flight, perception, AI, or experimental workflows, stop by booth 91.

We are looking forward to the conversations.