Today we have a guest blog post by Dominik Natter, who works in the Robotics & Control group at SINTEF in Trondheim, Norway. Enjoy!

In this blog post we will show you how to fly the Crazyflie over edges without crashing, using only on-board sensors. Come join in!

Introduction

UAVs have seen tremendous progress in the last decades and have since moved from research labs to various real-world environments. Small UAVs (so-called micro air vehicles, MAVs) like the Crazyflie open up even more possibilities. For example, their size allows them to traverse narrow passages or fly in cluttered environments (as recently showcased in this blog post). However, to achieve these complex tasks without crashing, the community must further improve the cognitive abilities of these MAVs.

One task on this list, and today’s topic, is flying at constant altitude irrespective of the terrain. This feature was already discussed in the community two years ago. To understand the problem, let’s look at the currently implemented solution: with the Flow deck mounted, the Crazyflie uses a 1D lidar sensor to estimate its vertical position. This vertical position (more or less) equals the current sensor reading. On flat floors this solution works very well. However, if the Crazyflie has to pass through a narrow window or fly above irregular terrain, its altitude will change based on the sensor readings. This can lead to unstable flights, as in the following video, or even crashes!

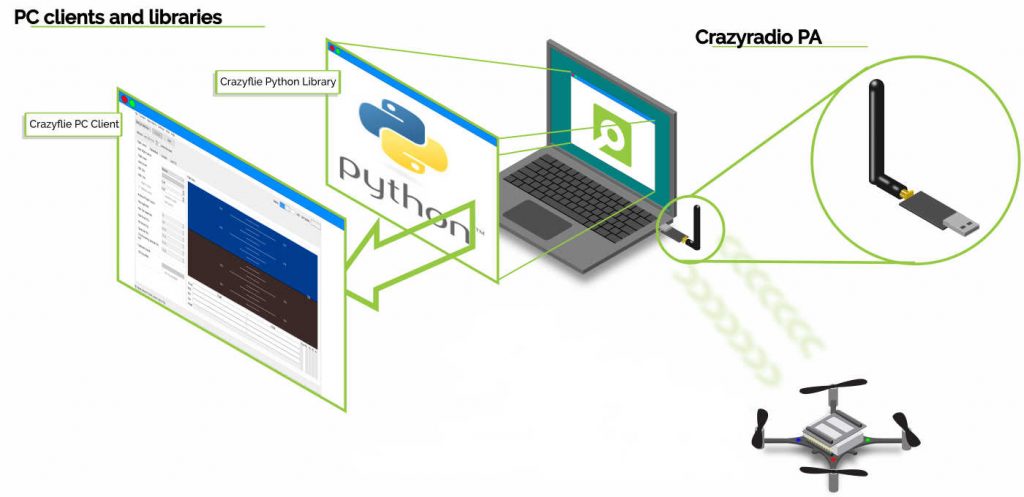

You might wonder: why not use any of the other great tools from the Bitcraze universe? Indeed, the Lighthouse positioning system and the Loco positioning system work well for absolute positioning (as we have seen earlier, e.g., in this blog post). However, the required setups are often not available in difficult environments. Alternatively, the barometer could be used to achieve a solution based solely on on-board sensors. In fact, Bitcraze proposed an altitude hold functionality a few years ago. This is a cool feature, but its positioning accuracy of “roughly ±15cm” is not fully satisfying. Finally, relying on the on-board IMU alone will inevitably lead to drift over time.

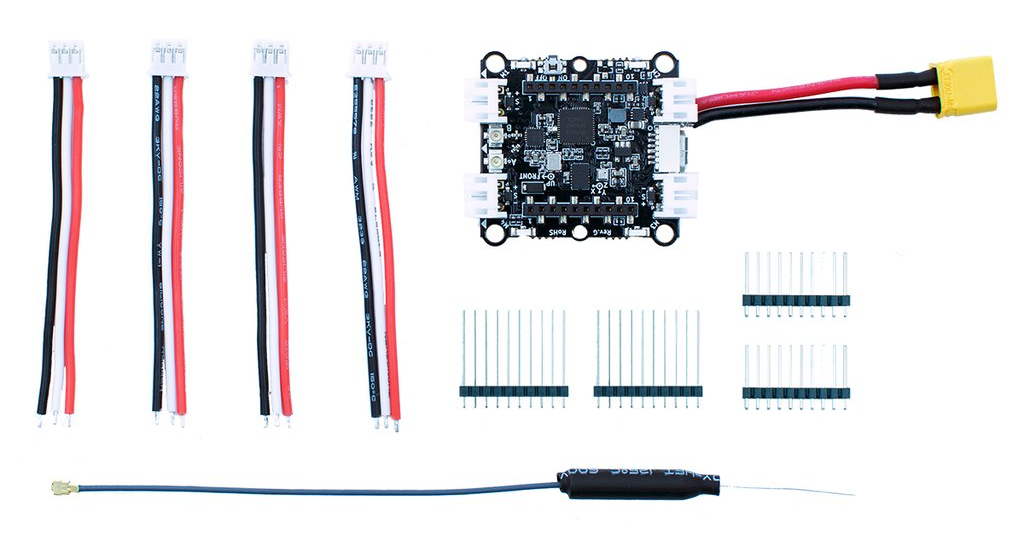

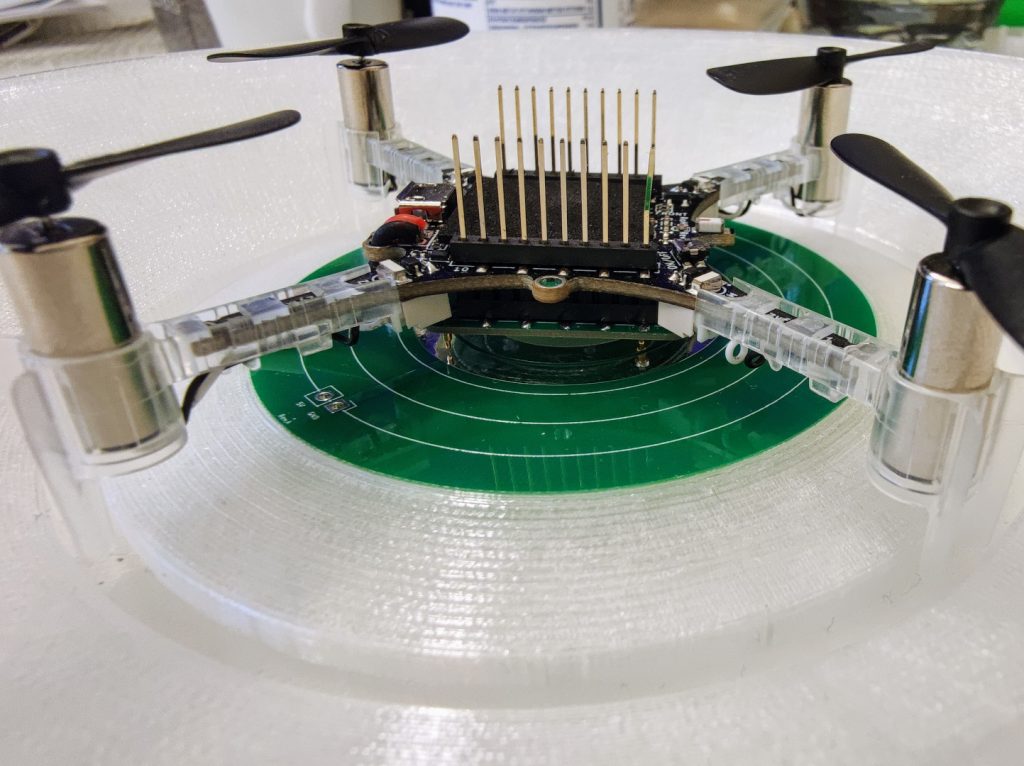

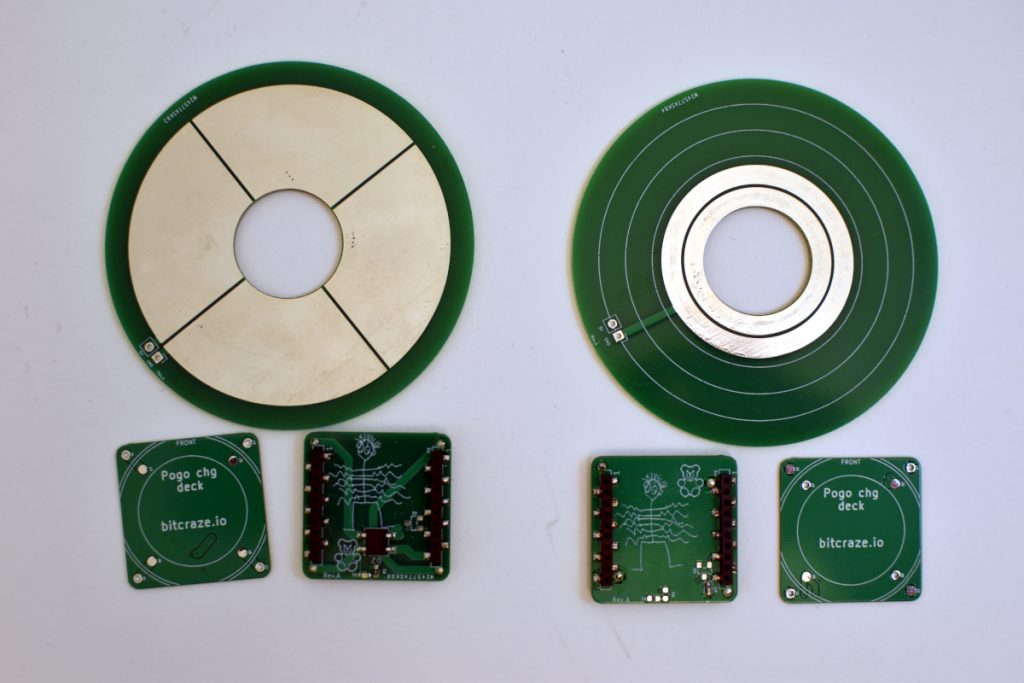

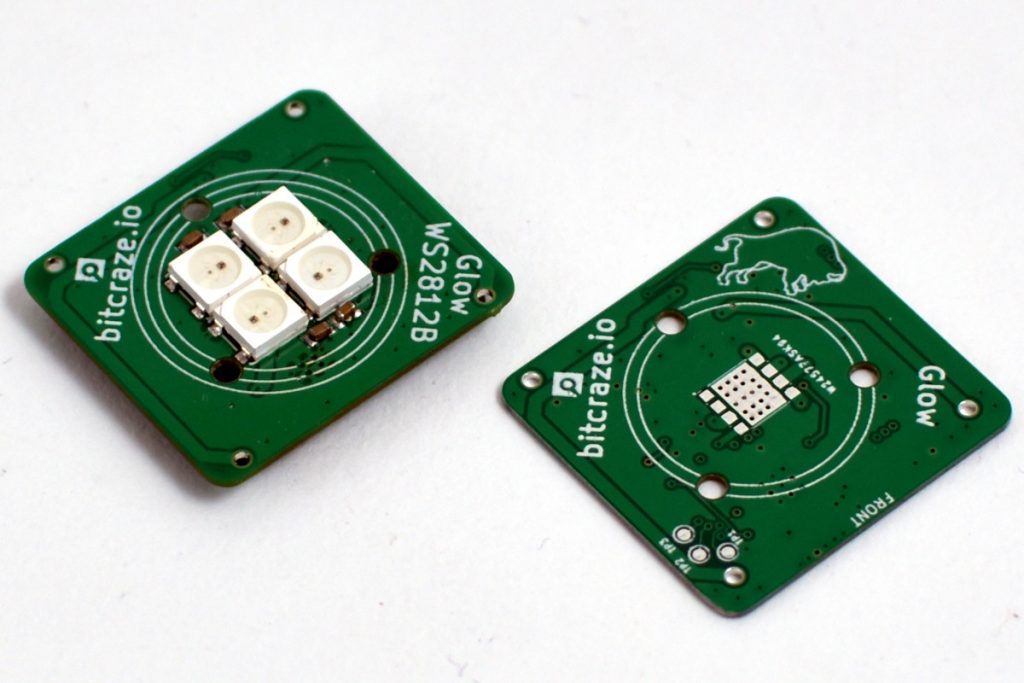

Thus, we propose a solution based on the Flow deck and the Multiranger deck. This approach, based only on on-board sensors, makes it possible to fly at constant altitude with obstacles above, below, or even both above and below the Crazyflie. Kristoffer Skare developed this solution when he worked with us as an intern in 2021.

Technical Description

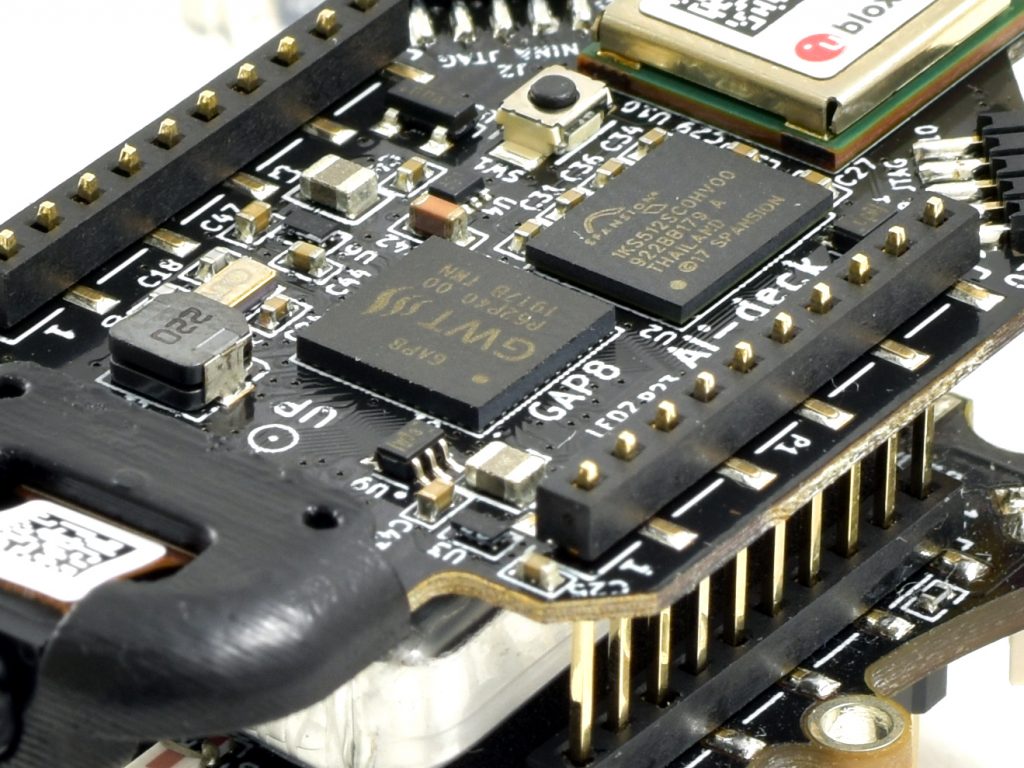

As a first step, the upward-facing lidar of the Multiranger deck is incorporated in the same way the downward-facing lidar of the Flow deck is used in the firmware. This additional measurement can then be used in the extended Kalman filter (EKF) to improve the state estimation. Currently, the EKF estimates and outputs one value for the altitude. For our purpose, two more states are added to the EKF. One state is the height of the object under the Crazyflie, measured relative to the height at which the altitude state is defined as 0. Similarly, the other state is the height of the object above the Crazyflie, measured relative to the same reference height. The Crazyflie therefore keeps track of its environment in order to keep its own altitude constant. To achieve this, we implemented edge detection: the errors between the predicted and measured distances are tracked for both the upward and the downward range measurements. If either of these errors is too large, the algorithm assumes that the floor or roof has changed (whereas the original EKF would think the drone’s position has changed, triggering a change in thrust). Thus, the corresponding state gets updated. For more details on the technical implementation and the code itself, check out our pull request.
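To make the edge detection idea more concrete, here is a minimal Python sketch. It is not the code from the pull request and it is not an EKF: it replaces the filter with a simple fixed-gain correction, and the names `AltitudeEstimate`, `update_down`, `update_up`, `EDGE_THRESHOLD_M`, and `GAIN`, as well as their values, are assumptions made up for illustration. It only shows the decision described above: a large error between predicted and measured range is attributed to the environment, a small one to the drone.

```python
from dataclasses import dataclass

# Hypothetical tuning values, chosen only for this illustration.
EDGE_THRESHOLD_M = 0.10  # errors above this are treated as an edge
GAIN = 0.5               # fixed correction gain standing in for the EKF update


@dataclass
class AltitudeEstimate:
    z: float = 0.0      # altitude of the drone above the reference height
    floor: float = 0.0  # height of the object below, same reference
    roof: float = 2.0   # height of the object above, same reference


def update_down(est: AltitudeEstimate, measured_range: float) -> None:
    """Fuse one downward range measurement (Flow deck)."""
    predicted = est.z - est.floor
    error = measured_range - predicted
    if abs(error) > EDGE_THRESHOLD_M:
        # Too large to be drone motion: assume the floor height changed.
        est.floor = est.z - measured_range
    else:
        # Small error: correct the altitude estimate instead.
        est.z += GAIN * error


def update_up(est: AltitudeEstimate, measured_range: float) -> None:
    """Fuse one upward range measurement (Multiranger deck)."""
    predicted = est.roof - est.z
    error = measured_range - predicted
    if abs(error) > EDGE_THRESHOLD_M:
        # Too large to be drone motion: assume the roof height changed.
        est.roof = est.z + measured_range
    else:
        # A longer upward range than predicted means the drone is lower.
        est.z -= GAIN * error


if __name__ == "__main__":
    est = AltitudeEstimate(z=0.5, floor=0.0, roof=2.0)
    update_down(est, 0.275)  # the drone passes over a 0.225 m box
    print(est)
```

In this sketch, the sudden drop of the downward range from 0.5 m to 0.275 m moves the floor state up by the height of the box while the altitude state stays where it was, so no change in thrust would be triggered.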

Results

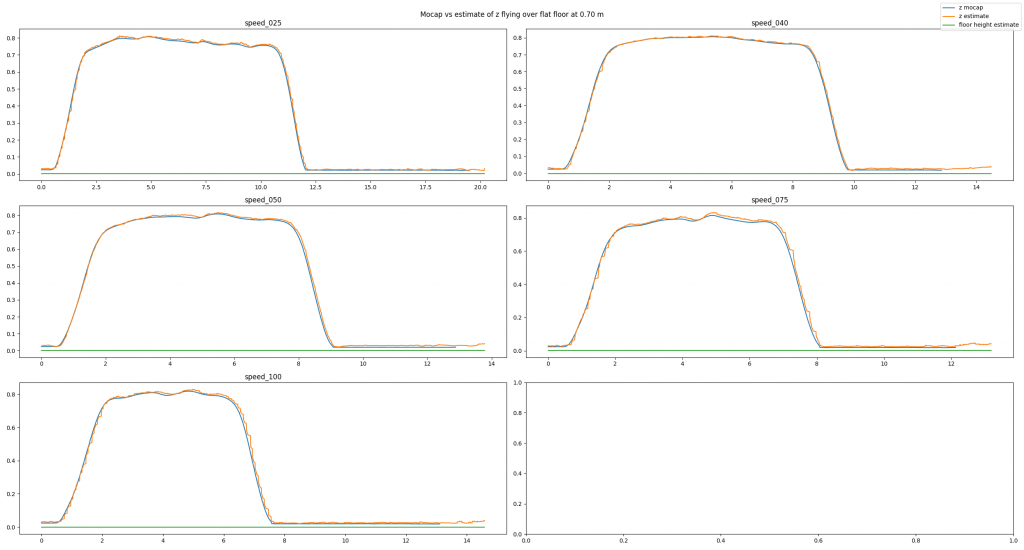

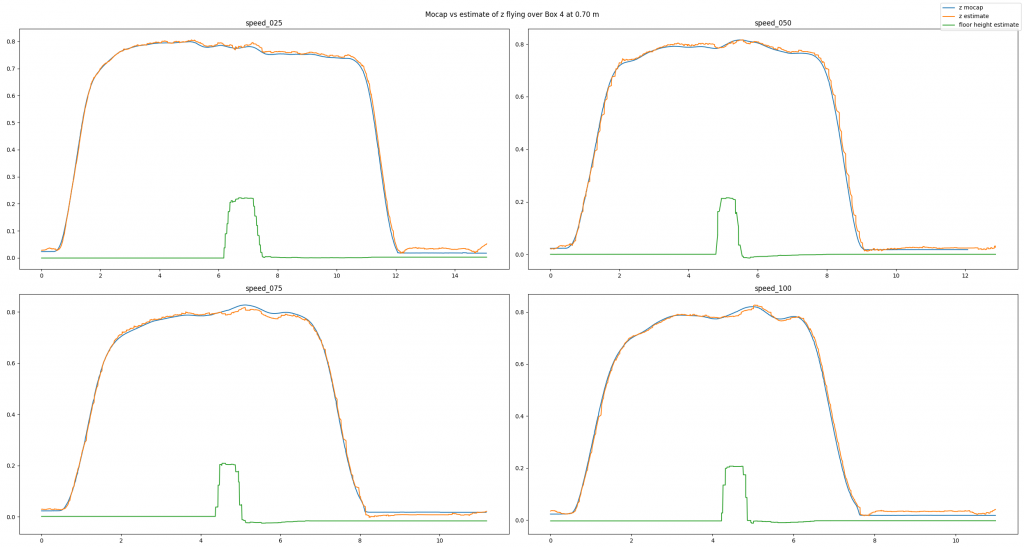

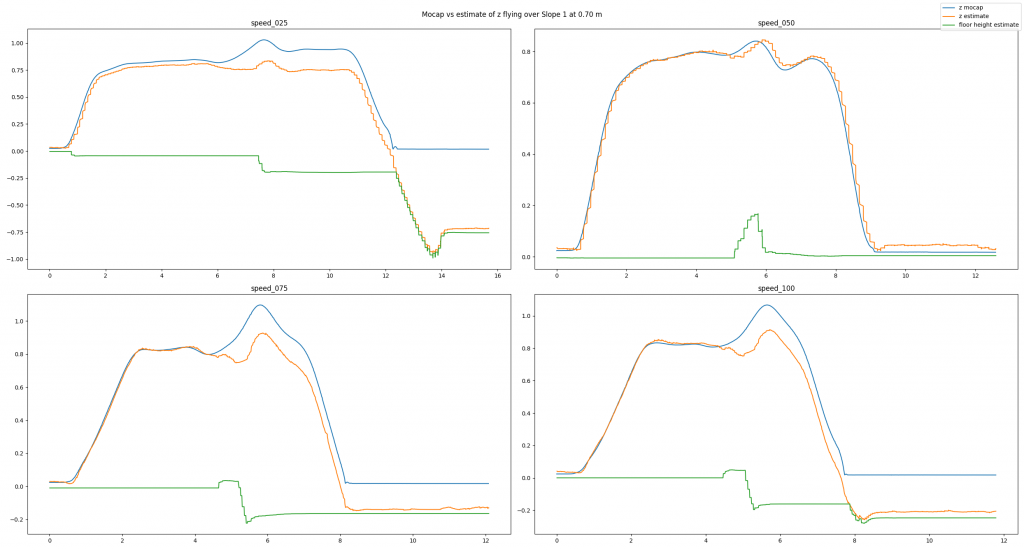

To analyze our approach we used a Qualisys motion capture system. We conducted many different tests: flying over different obstacles, at different velocities, at different altitudes, and even under different lighting conditions. In this post we will look at three examples: a baseline, a good estimate, and a bad estimate. In each picture you can see the altitude (in meters) over time (in seconds) for different flight speeds (in centimeters per second). You will see three lines: the motion capture ground truth (blue), the altitude estimated by our code (orange), and the new state keeping track of the floor height (green). For each plot, the Crazyflie takes off, flies in the positive x direction, and lands.

In the baseline experiment, it flies over a flat floor. Clearly, the altitude estimates follow the ground truth values well, and the floor is correctly estimated to be flat.

In the next example, we added a box with an approximate height of 0.225 m and made the Crazyflie fly over it. Despite the obstacle, the altitude estimates follow the ground truth values well. Note how the floor estimate traces the shape of the box.

Because the algorithm is based on edge detection, we had a hunch that smoothly changing obstacles would pose a problem. Indeed, the estimates can be messy, as we see in the next example. Here, the Crazyflie flies over a right triangle, with the short leg of 0.23 m pointing upwards and the long leg of 0.65 m pointing in the flight direction (thus forming a slope). The estimates turn out quite differently for different flight speeds.
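A back-of-the-envelope calculation hints at why a slope is harder than a box for an approach based on edge detection. The Python sketch below uses the slope dimensions and box height given above. The sensor update rate, the detection threshold, and the flight speeds are hypothetical values picked for illustration and are not taken from our implementation or experiments.

```python
import math

SLOPE_HEIGHT_M = 0.23    # short leg of the triangle (from this post)
SLOPE_LENGTH_M = 0.65    # long leg of the triangle (from this post)
BOX_HEIGHT_M = 0.225     # box from the previous example (from this post)

SAMPLE_RATE_HZ = 25.0    # hypothetical range sensor update rate
EDGE_THRESHOLD_M = 0.10  # hypothetical edge detection threshold


def slope_angle_deg() -> float:
    """Inclination of the slope relative to the floor, in degrees."""
    return math.degrees(math.atan2(SLOPE_HEIGHT_M, SLOPE_LENGTH_M))


def range_change_per_sample(speed_m_s: float,
                            rate_hz: float = SAMPLE_RATE_HZ) -> float:
    """Change of the downward range between two consecutive samples
    while flying horizontally over the slope at the given speed."""
    rise_per_meter = SLOPE_HEIGHT_M / SLOPE_LENGTH_M
    return rise_per_meter * speed_m_s / rate_hz


if __name__ == "__main__":
    print(f"slope angle: {slope_angle_deg():.1f} deg")
    for speed in (0.1, 0.3, 0.5):  # hypothetical flight speeds in m/s
        step = range_change_per_sample(speed)
        print(f"{speed:.1f} m/s: {step * 1000:.1f} mm per sample")
```

The slope rises at roughly 19.5 degrees. Under these assumptions the range changes by only a few millimeters between samples, far below the assumed threshold, whereas the box changes the range by its full 0.225 m at once. How the resulting small errors are split between the altitude state and the floor state then depends on the flight speed, which would be consistent with the speed-dependent estimates in the plots.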

If you don’t like looking at plots, check out this video with some cool shots instead!

Conclusion

To summarize, we propose a solution for constant altitude flight with Crazyflies, using the Flow deck and the Multiranger deck. We have tested it successfully under various circumstances. Still, we see some potential for improvement, e.g., when dealing with slopes. In addition, the current implementation changes the original EKF considerably, which poses a problem for integration.

Thus, one way forward could be an out-of-tree build to make the solution easier for the community to use. At SINTEF we certainly plan to deploy this code in all of our tests in 2022, which will hopefully allow us to gather more experience and thus find further ways to improve or tune the system.

We want to emphasize that this is not a perfect solution. That means a) you should use it with care and b) you are very welcome to contribute. For example, feel free to chime in on the pull request, test the code in your environments, propose improvements, or implement an out-of-tree build! :) Maybe you can even come up with an alternative approach for constant altitude flights?

If you want to check out more of our work, visit our website. Also, keep reading this amazing blog from Bitcraze, as we will try to be back some day (if Bitcraze wants us hehe)!