As mentioned earlier, we will be attending ICRA 2019 and have started to work on the demo that we will run in our booth. The main features we are going to show this time are the Lighthouse positioning deck and the Crazyflie 2.1.

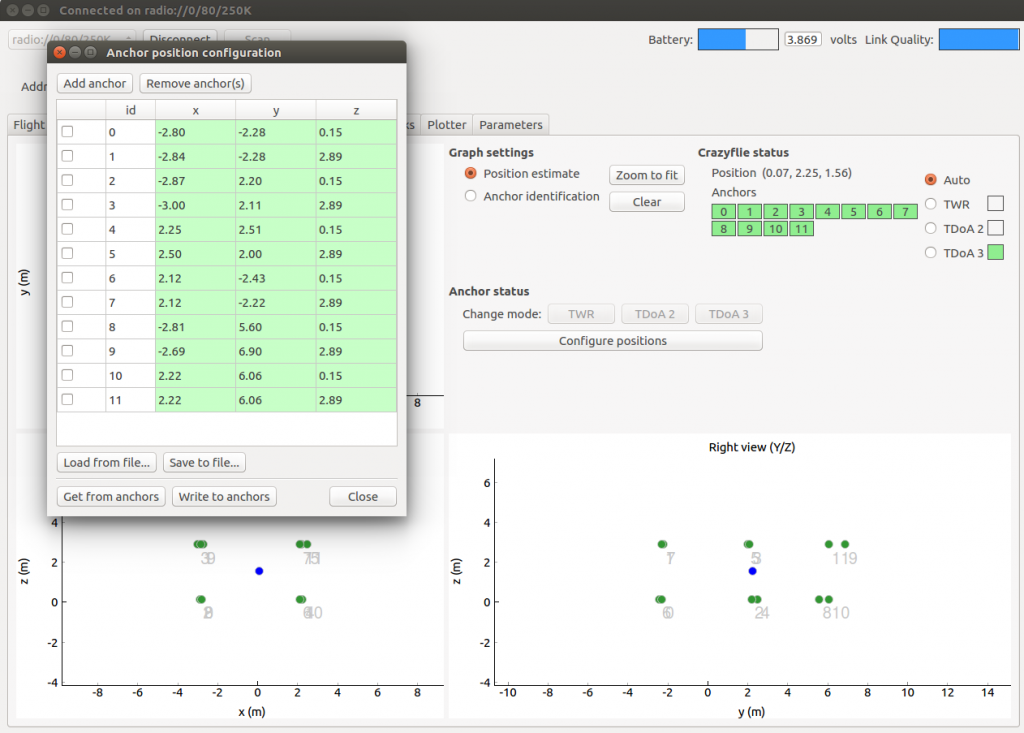

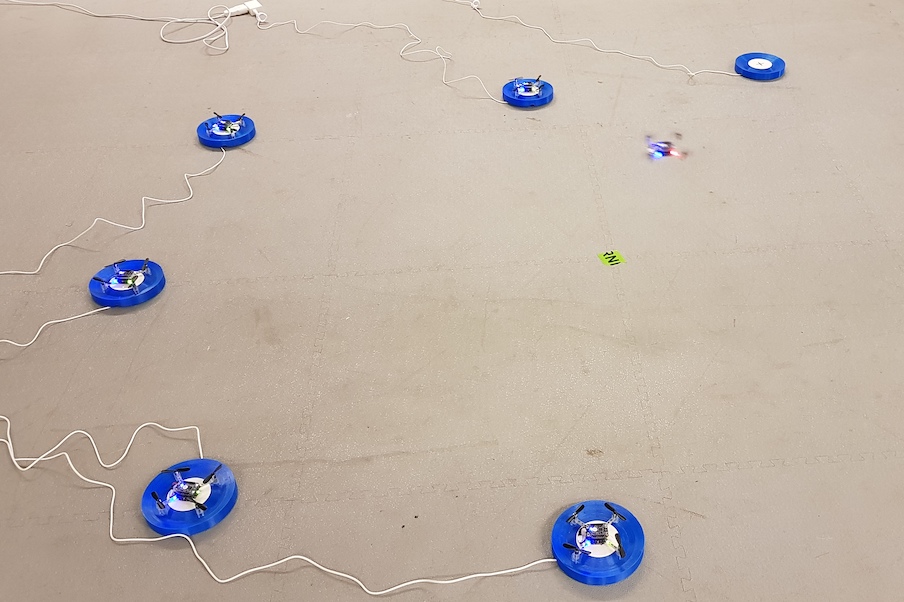

Running a demo with flying Crazyflies at a conference usually means a lot of work changing and charging batteries, starting demos and so on. This takes time and leaves us less time to talk to all the interesting people who visit our booth. This year we are aiming to make the demo as automated as possible. We will have 8 Crazyflie 2.1s with Lighthouse and Qi charger decks, each with its own charging pad. A computer will orchestrate the Crazyflies and make sure one is flying at all times while the others recharge their batteries. When the battery of the flying Crazyflie is depleted, it goes back to its pad while another one takes over.

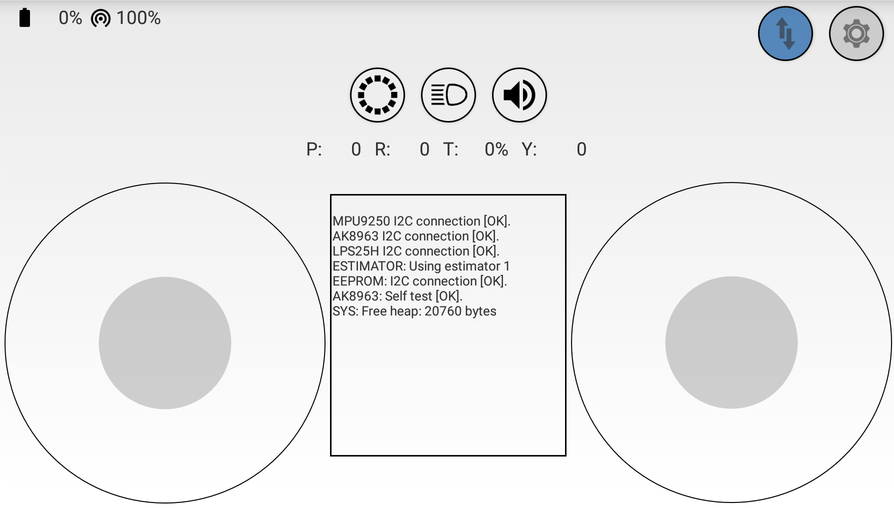

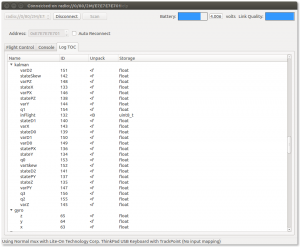

Most of the functionality is implemented in the Crazyflie firmware, and a Crazyflie is pretty much autonomous once its trajectory is started. It flies the trajectory over and over until it is low on battery, then goes back to where it started to recharge. Even though the Lighthouse positioning is very good, a Crazyflie sometimes slips off the charging pad when landing, so we have added a reposition feature: if it is not charging after landing, it takes off again and makes a new landing attempt.
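To make the flow above a bit more concrete, here is a minimal sketch of it as a state machine. It is an illustration only, not the actual firmware code (the firmware is written in C); the state names and the boolean inputs are made up for this example.

```python
from enum import Enum, auto


class State(Enum):
    # All state names are hypothetical, chosen for this sketch.
    ON_PAD = auto()          # on the charging pad, waiting for a start command
    FLYING = auto()          # repeating the trajectory
    LANDING = auto()         # going back to the start position and landing
    CHECK_CHARGING = auto()  # landed, checking whether the charger has contact
    REPOSITION = auto()      # taking off again before a new landing attempt


def next_state(state, start_requested=False, battery_low=False,
               touched_down=False, is_charging=False, airborne=False):
    """Return the state that follows `state` given the current inputs."""
    if state is State.ON_PAD:
        return State.FLYING if start_requested else State.ON_PAD
    if state is State.FLYING:
        # Keep flying the trajectory until the battery is low.
        return State.LANDING if battery_low else State.FLYING
    if state is State.LANDING:
        return State.CHECK_CHARGING if touched_down else State.LANDING
    if state is State.CHECK_CHARGING:
        # Not charging means the copter probably slipped off the pad.
        return State.ON_PAD if is_charging else State.REPOSITION
    if state is State.REPOSITION:
        return State.LANDING if airborne else State.REPOSITION
    raise ValueError(f"unknown state: {state}")
```

A missed landing would walk through `CHECK_CHARGING`, `REPOSITION` and `LANDING` again until the charger finally reports contact.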

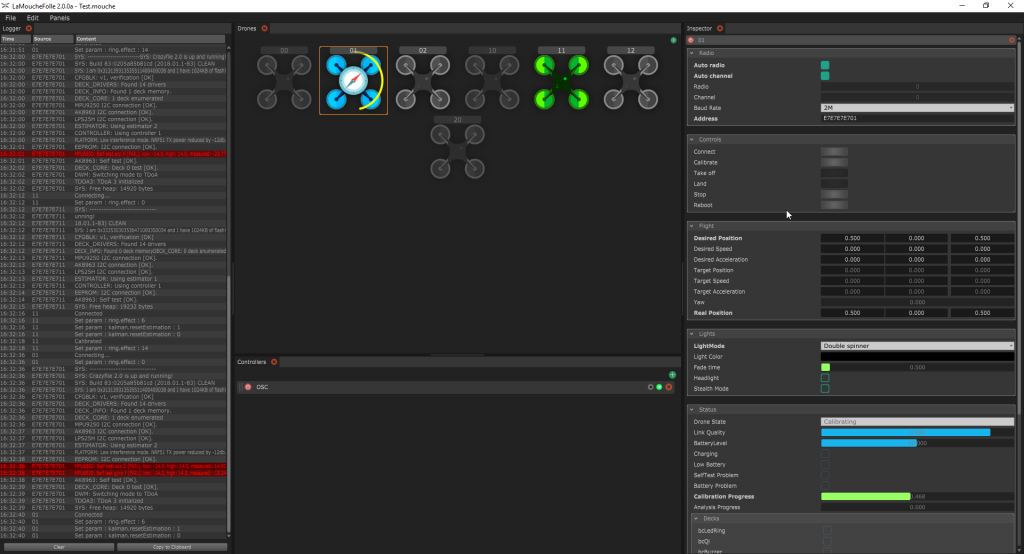

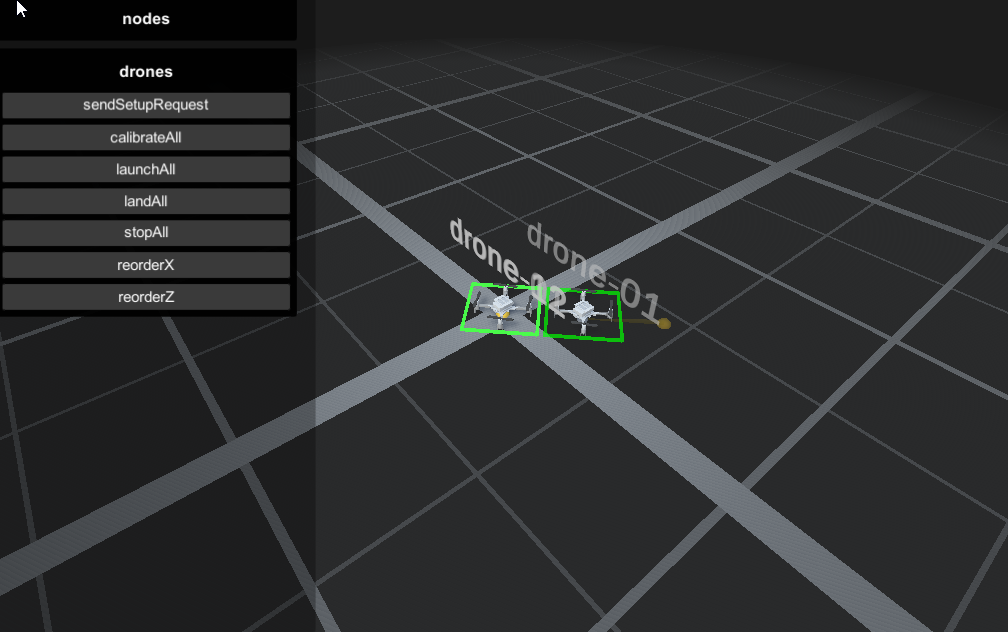

The orchestration computer (we call it the Control tower) just keeps track of the state of the flying copter and starts a new one when the flying one goes back to the pad.
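The decision the Control tower makes can be sketched in a few lines. This is a simplified illustration and not the code from the repository: the `Copter` class, the state strings and the `min_voltage` threshold are assumptions, and picking the copter with the highest battery voltage is just one reasonable policy.

```python
from dataclasses import dataclass


@dataclass
class Copter:
    # Hypothetical bookkeeping record for one Crazyflie.
    name: str
    state: str        # "charging", "flying" or "landing"
    battery_v: float  # last reported battery voltage


def copter_to_start(copters, min_voltage=4.0):
    """Return the name of the copter to start next, or None.

    Nothing is started while a copter is still flying. Otherwise the
    charged copter with the highest voltage is chosen (assumed policy).
    """
    if any(c.state == "flying" for c in copters):
        return None
    ready = [c for c in copters
             if c.state == "charging" and c.battery_v >= min_voltage]
    if not ready:
        return None
    return max(ready, key=lambda c: c.battery_v).name
```

Calling `copter_to_start` periodically with fresh state is enough for the single-copter demo, since each Crazyflie handles its own flight and landing.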

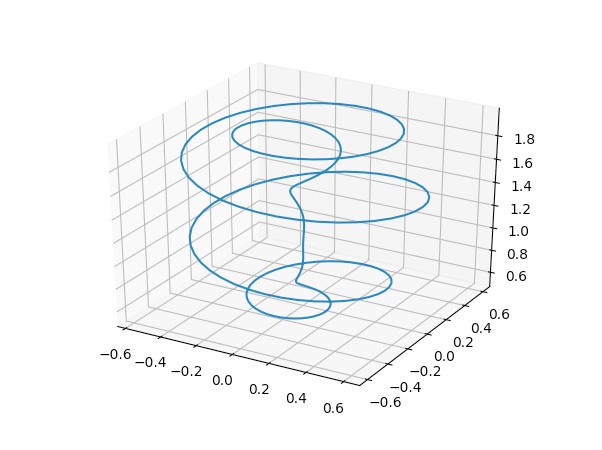

We are reusing the spiral trajectory from IROS last year. It has the property that up to 4 copters can fly it at the same time without colliding, if they are started at the correct times. There is a swarm feature in the Control tower that runs up to 4 Crazyflies with continuous replacements. The chargers are not fast enough to keep it going and it does not work as expected every time, but it is exciting to have a few more Crazyflies buzzing around!
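As a rough illustration of what "started at the correct times" could mean, the sketch below spreads the copters evenly over one lap of the trajectory. Note that the even spacing is an assumption made for this example, as are the function name and the `period_s` parameter; the real start times depend on the shape of the spiral.

```python
def start_offsets(period_s, n_copters, max_copters=4):
    """Return start delays in seconds, one per copter.

    Assumes (for illustration only) that copters are safely separated
    when they are evenly spaced in time over one lap of length period_s.
    """
    if not 1 <= n_copters <= max_copters:
        raise ValueError(f"n_copters must be between 1 and {max_copters}")
    if period_s <= 0:
        raise ValueError("period_s must be positive")
    return [i * period_s / n_copters for i in range(n_copters)]
```

With a hypothetical 20 second lap and 4 copters, each copter would start 5 seconds after the previous one.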

There are some bits and pieces left to implement, like crash detection, but the demo is mostly functional. If you want to play with the (slightly hackish) code, it is available at https://github.com/bitcraze/crazyflie-firmware-experimental/tree/icra-2019

You can find us in booth 101 at ICRA 2019, May 20 – 22. Drop by and say hi, check out the demo and tell us what you are working on. We love to hear about all the interesting projects that are going on. See you there!